Cost-Efficient Data Engineering

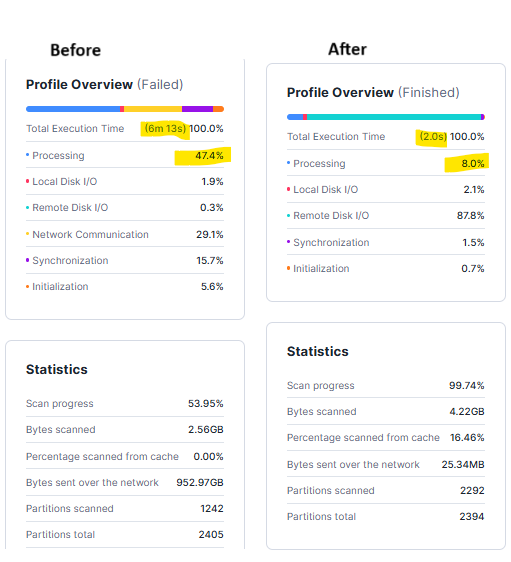

Optimizing Snowflake costs for high-volume Airflow-DBT pipelines, achieving 17% overall reduction and 40% warehouse savings.

Overview

A client was experiencing high Snowflake costs due to inefficiencies in their DBT jobs and warehouse configurations. With over 500+ daily DBT jobs, running for approximately 13 hours with a maximum parallel execution of 39 jobs, optimizing performance while reducing costs was a critical need.

Through a combination of warehouse right-sizing, query optimizations, and improved execution monitoring, we helped the client achieve 17% cost savings without compromising performance.

Challenges Identified

Challenges Identified

The client faced significant cost inefficiencies in their snowflake environment.

High Snowflake Warehouse Costs

The warehouse was running at an overprovisioned capacity, leading to unnecessary expenditure.

Idle Compute Costs

Warehouses were not being suspended efficiently, leading to cost leakage.

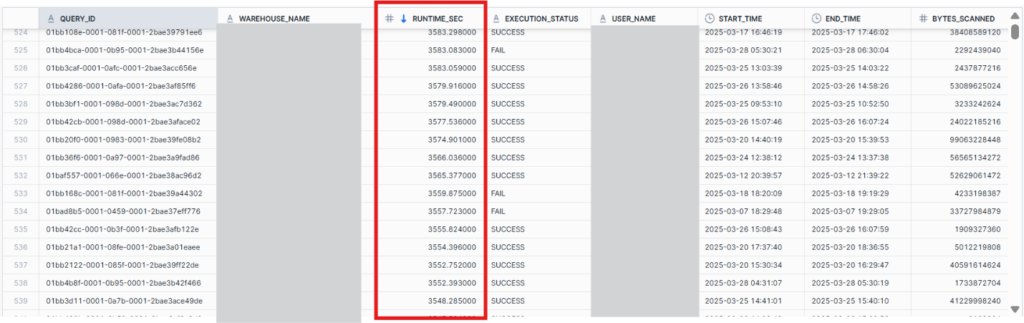

Long-Running Queries

Inefficient SQL queries were increasing execution times and resource consumption.

Ineffective Model Design

Some DBT models were running as full refresh instead of incremental processing, leading to unnecessary recomputation.

Actions Taken to Reduce Costs

Actions Taken to Reduce Costs

We implemented a multi-faceted approach to optimize both Snowflake configuration and DBT model execution.

Key features

Warehouse Optimization

Optimizing warehouse configurations for better cost efficiency.

- Reduced Warehouse Size: Changed from Medium to Small for better cost efficiency.

- Enabled Auto-Suspend: Configured warehouses to auto-suspend after inactivity.

- Right-Sized Warehouses for Different Workloads: Optimized usage based on concurrency needs.

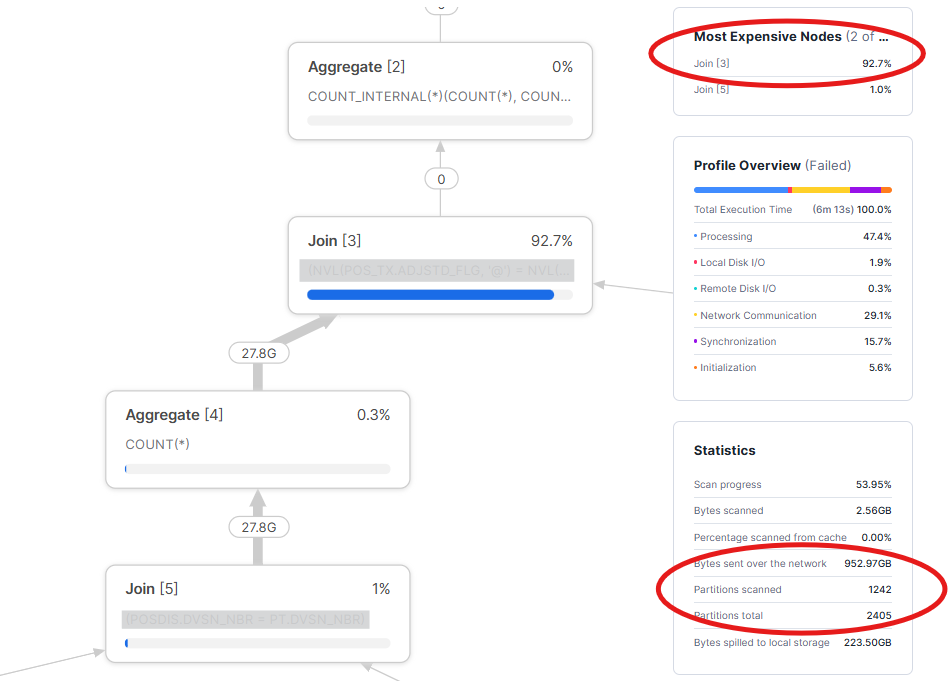

Identifying Costly Queries

Tracking and optimizing long-running queries.

SELECT query_id, warehouse_name, total_elapsed_time/1000 AS runtime_sec, execution_status, user_name, start_time, end_time, bytes_scanned

FROM snowflake.account_usage.query_history

WHERE start_time >= 'YYYY-MM-DD HH:MM:SS' -- DBT start time

AND end_time <= 'YYYY-MM-DD HH:MM:SS' -- DBT end time

ORDER BY start_time;DBT Model Optimization

Improving DBT model efficiency and performance.

- Converted Full Refresh Models to Incremental: Reduced unnecessary recomputation.

- Optimized SQL Joins: Ensured joins were correctly partitioned and indexed.

- Split Large Models: Divided SQL with heavy dependencies (e.g., 19 tables) into intermediate models.

- Used Materialized Views: Leveraged Snowflake caching for frequently accessed aggregated data.

Cost Analysis and Performance Monitoring

Tracking and analyzing cost patterns.

- Identified Cost Patterns in DBT and Snowflake using run_results.json and DBT Cloud API.

- Determined cost distribution and peak usage times.

- Differentiated between DBT inefficiencies vs. Snowflake configuration issues.

DBT Execution Time vs. Snowflake Cost Analysis:

Scenario 1: Cost Spreading Across the Day

- Possible causes: Idle warehouse usage, background jobs.

- Solution: Determined cost distribution and peak usage times.

Scenario 2: Cost Peaking During DBT Runs

- Possible causes: Expensive queries, inefficient execution plans.

- Solution: Query optimization, warehouse right-sizing, caching strategies.

Additional Optimization Strategies Implemented

Warehouse Scaling & Configuration

Avoided over-provisioning and used multi-cluster warehouses selectively for peak concurrency.

Caching & Query Optimization

Leveraged Snowflake's result cache and implemented clustering keys for large table scans.

Concurrency & Load Management

Optimized query concurrency, reducing max parallel queries from 39 to 25.

Benefits Realized & Measured Impact

Benefits Realized & Measured Impact

Reduced Warehouse Costs

Eliminated overprovisioned capacity and unnecessary expenditure.

Improved Query Performance

Achieved through optimized SQL and transition to incremental models.

Eliminated Idle Costs

By enforcing strict warehouse suspend policies.

Reduction in DBT Runtime

Reduced from 13 hours to 9 hours by optimizing joins and clustering.

Execution Monitoring

Enhanced systems to detect cost-intensive queries before they escalate.

Concurrency Improvement

Reduced max parallel queries from 39 to 25 while maintaining throughput.

Conclusion

Conclusion

By leveraging query optimization, warehouse right-sizing, and execution monitoring, we successfully helped our client reduce daily Snowflake costs by 17% while maintaining performance.

This case study demonstrates how small changes in DBT and Snowflake configurations can lead to substantial cost savings and efficiency gains.

For organizations looking to optimize their Snowflake costs, a structured cost analysis and optimization strategy is key to achieving long-term savings.

Empower Your Business With Cutting-edge IT Solutions

Unlock Innovation and Growth with Our Expert Solutions